usual user


Efforts to develop firmware for H96 MAX V56 RK3566 4G/32G

usual user replied to Hqnicolas's topic in Rockchip CPU Boxes

The first iteration of mainline kernel driver support has just been posted. So a kernel build with this patch set applied should give a playground for initial experiments.

-

A few days ago I rebuilt my complete firmware package to try something with another device, and an HC4 firmware binary automatically falls out of this process. If you like, you can put it on a microSD card (dd bs=512 seek=1 conv=notrunc,fsync if=u-boot-meson.bin of=/dev/${entire-device-to-be-used}), place the prepared microSD card in your HC4, and start it with the boot button pressed. Check whether it meets your expectations, and if all tests are successful, you can transfer it to the SPI flash.
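To make the dd options above more tangible, here is a minimal Python sketch of what `bs=512 seek=1 conv=notrunc,fsync` amounts to: the image is written starting 512 bytes into the target, everything outside the written range stays untouched, and the data is flushed before returning. The names `write_at_sector` and `demo` are made up for illustration, and the sketch works on a scratch file rather than a real card, so it is only a model of the behaviour and not a replacement for dd.

```python
import os
import tempfile

SECTOR_SIZE = 512  # corresponds to bs=512 in the dd call


def write_at_sector(image: bytes, target_path: str, seek_sectors: int = 1) -> int:
    """Write image into target_path starting at seek_sectors * SECTOR_SIZE.

    Models dd's seek=N and conv=notrunc,fsync: bytes before the offset and
    after the image are left untouched, and the data is flushed to storage.
    Returns the byte offset that was used.
    """
    offset = seek_sectors * SECTOR_SIZE
    # "r+b" opens an existing file for update without truncating it.
    with open(target_path, "r+b") as target:
        target.seek(offset)
        target.write(image)
        target.flush()
        os.fsync(target.fileno())
    return offset


def demo():
    """Run the write against a scratch file that stands in for the card."""
    with tempfile.NamedTemporaryFile(delete=False) as scratch:
        scratch.write(b"\xAA" * (4 * SECTOR_SIZE))  # pretend card contents
        path = scratch.name
    try:
        write_at_sector(b"\x55" * 700, path)  # pretend firmware, 700 bytes
        with open(path, "rb") as card:
            data = card.read()
    finally:
        os.remove(path)
    end = SECTOR_SIZE + 700
    # First sector, the written image, and the untouched remainder.
    return data[:SECTOR_SIZE], data[SECTOR_SIZE:end], data[end:]
```

Calling `demo()` returns the three regions separately, which makes it easy to see that the first sector and the tail of the target keep their old contents.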

-

The one from my firmware build.

-

Since I haven't restarted the M1 for some time, I am currently still at:

    # uptime
    12:56:23 up 115 days, 1:51, 5 users, load average: 1.76, 1.26, 0.92
    # uname -a
    Linux micro-015 6.18.0-65.fc44.aarch64 #1 SMP PREEMPT_DYNAMIC Sun Dec 7 20:40:45 CET 2025 aarch64 GNU/Linux

I still get:

So nothing to complain about.

-

NanoPC-T6 LTS Reset button doesn't work on 6.18.x kernel

usual user replied to ando's topic in NanoPC T6 LTS

According to the circuit diagram, the reset button is connected to the hardware reset lines, so nothing can prevent forcing the reset state.

-

At least that explains the result of the nvme scan command. Now it remains to find out why this is the case, but since improvements are still pending for PCIe support even in the mainline kernel, the question arises whether all of this has already been migrated into the firmware.

-

u-boot-rockchip-spi.bin is a firmware. Out of pure curiosity, what is the result of 'pci enum' on the firmware console?

-

IMHO, the OP wants to boot the OS from NVMe while the firmware is stored in the SPI flash, but he is using firmware in which the necessary support is not enabled.

-

CSC Armbian for RK3318/RK3328 TV box boards

usual user replied to jock's topic in Rockchip CPU Boxes

I usually use Falkon in a Plasma environment with Wayland backend.

-

CSC Armbian for RK3318/RK3328 TV box boards

usual user replied to jock's topic in Rockchip CPU Boxes

I haven't looked at this use case for a very long time, and I can no longer remember since when it has worked out-of-the-box for me. Since decoder support has been part of the GStreamer framework for a very long time, hardware-supported video decoding works for all browsers that use this framework with the standard packages of the distribution of my choice.

When v4lrequest support was still implemented with the out-of-tree patches using the stateful method, it also worked with Firefox out-of-the-box; only an accordingly patched FFmpeg framework was required. This is unlikely to work any longer with the current patches for the FFmpeg framework and requires an additional implementation in Firefox. I suspect, however, that this will only happen after the official inclusion of v4lrequest support in the FFmpeg framework, as is also the case with MPV. Whether patches for Firefox are already available is unknown to me.

For the distribution of my choice, I have in any case rebuilt the FFmpeg and MPV packages with the corresponding patches. I have to confess that I usually use Firefox, and video decoding works flawlessly for my use cases. However, I cannot say whether this is actually hardware-accelerated, because the SBCs I use with a graphical desktop are powerful enough to cope even with software-only decoding. I'm just taking the lazy way here and waiting for it to end up in mainline. For SBCs that need hardware acceleration, I simply use a browser that uses the GStreamer framework.

-

Armbian_24.11.2_Orangepi5_noble_current_6.12.0-kisak NPU driver version

usual user replied to thanh_tan's topic in Rockchip

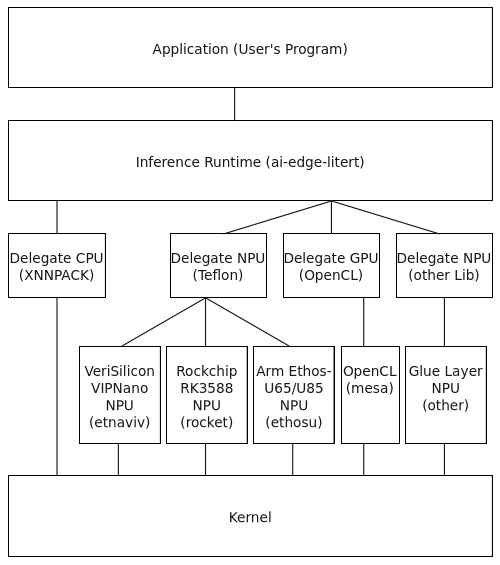

From an OS's point of view, only a Mesa build with Teflon and Rocket driver support is needed (available since Mesa 25.3). Inferences can then be executed with ai-edge-litert. I have been experimenting with this for some time on all my devices equipped with Rockchip RK3588/RK3588s.

-

How to use OrangePi 5 Plus's NPU for Image Generation?

usual user replied to Johson's topic in Beginners

I am currently at 7.0.0-rc1. I can upload my jump-start image so you can check whether my kernel build works with your device. If you like what you see, installing the kernel package alongside your existing system is only a 'prepare-jump-start ${target-mount-point}' away.

I know about it, but since it is just another non-mainline solution with its own dependency mess, I am not particularly interested.

-

How to use OrangePi 5 Plus's NPU for Image Generation?

usual user replied to Johson's topic in Beginners

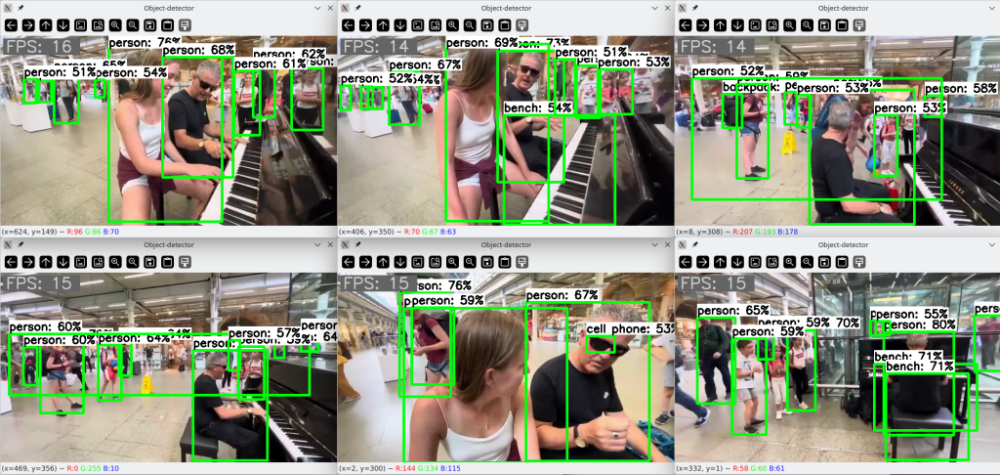

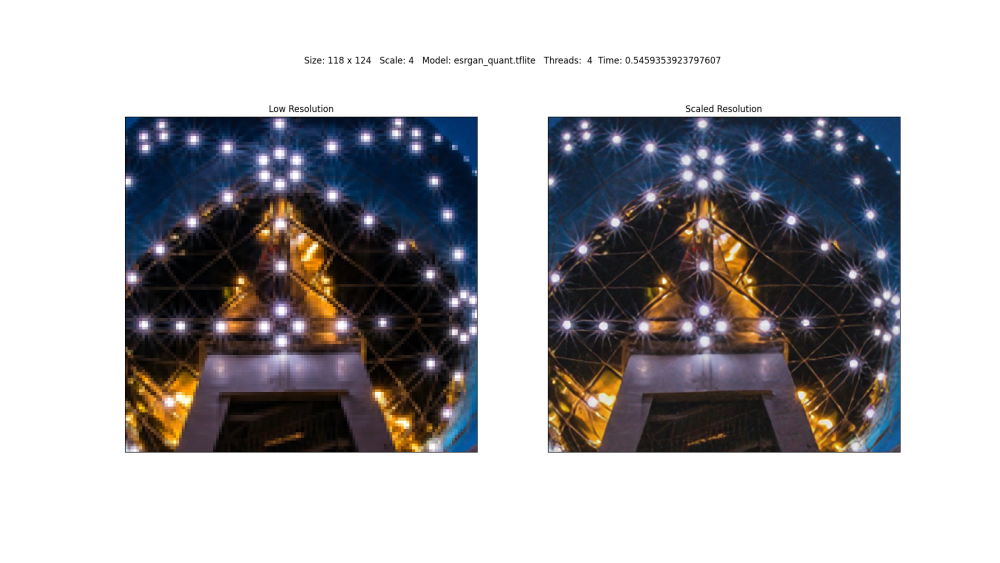

Since hardware support for Rockchip SoCs in the mainline kernel is generally already outstanding and is still being actively developed, the only SBCs with integrated NPUs that I own are based on them. Among them are ODROID-M2, NanoPC-T6, and ROCK-5-ITX. But since the NPU is an integral part of the SoC, the board manufacturer and the design of the SBC are not necessarily of importance. As far as I understand, edge-class NPUs are best suited for computer vision tasks. I am therefore engaged in object detection and super-resolution, as the two images above show.

-

How to use OrangePi 5 Plus's NPU for Image Generation?

usual user replied to Johson's topic in Beginners

This is what my software stack looks like:

My kernel is built as a generic one, hence my OS works on any device equipped with a VeriSilicon VIPNano, a Rockchip RK3588, or an Arm Ethos-U65/U85 NPU. The application can be written NPU-agnostic, as long as a model.tflite file suitable for the NPU is used.
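As a rough illustration of what such an NPU-agnostic application could look like, here is a minimal Python sketch built on the ai-edge-litert package mentioned earlier in this feed. It assumes that `ai_edge_litert.interpreter` exposes `Interpreter` and `load_delegate` in the same way as the classic TFLite runtime, and that the Teflon delegate is available as a shared library (commonly `libteflon.so`, but the path depends on the distribution). The function names `load_interpreter` and `run_once` are made up for this sketch; it is not the stack shown in the post.

```python
def load_interpreter(model_path, delegate_path=None):
    """Create a LiteRT interpreter, optionally with an external delegate.

    delegate_path is an assumption: the path to Mesa's Teflon library.
    Without it, the model simply runs on the CPU.
    """
    # Imported lazily so run_once() can be used without the package installed.
    from ai_edge_litert.interpreter import Interpreter, load_delegate

    delegates = [load_delegate(delegate_path)] if delegate_path else []
    interpreter = Interpreter(
        model_path=model_path, experimental_delegates=delegates
    )
    interpreter.allocate_tensors()
    return interpreter


def run_once(interpreter, input_array):
    """Feed one input tensor and return the first output tensor."""
    input_info = interpreter.get_input_details()[0]
    output_info = interpreter.get_output_details()[0]
    interpreter.set_tensor(input_info["index"], input_array)
    interpreter.invoke()
    return interpreter.get_tensor(output_info["index"])
```

Nothing in `run_once` refers to a particular NPU; under these assumptions, only the model.tflite file and the delegate passed to `load_interpreter` would differ between devices.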