usual user

Members-

Posts

533 -

Joined

-

Last visited

Content Type

Forums

Store

Crowdfunding

Applications

Events

Raffles

Community Map

Everything posted by usual user

-

How to upgrade Linux to libopus 1.5.1

usual user replied to Celona's topic in Software, Applications, Userspace

Looks like I'm already equipped with it by upgrading behind my back. -

Quite possible, because due to my migration to bootstd, this entire code block no longer exists for me.

-

OK, then you're now on this version and it's not a general issue, it's about how Armbian's firmware is built.

-

If the microSD card in the microSD card slot is the one with the Armbian_24.2.1_Odroidn2_bookworm_current_6.6.16_cinnamon_desktop.img.xz then it is: dd bs=512 seek=1 conv=notrunc,fsync if=odroid-n2-plus/u-boot-meson.bin of=/dev/mmcblk1 It is always the NAME that lsblk enumerates with the TYPE "disk".

-

Out of curiosity, does it boot when you drop in my firmware build? dd bs=512 seek=1 conv=notrunc,fsync if=odroid-n2-plus/u-boot-meson.bin of=/dev/${entire-device-to-be-used}

-

How to use wiringpi python for banana pi cm4 io?

usual user replied to Maverick's topic in Banana PI CM4-IO

Read what the original author posted. Banana Pi CM4 has full generic GPIO support in mainline kernel ready to use. With libgpiod, all the necessary Python 3 bindings are available, so start writing your planned application. Since you have even developed the board yourself, you also have the necessary knowledge of which of the approximately 100 GPIO-capable pins you have wired where. -

How to use wiringpi python for banana pi cm4 io?

usual user replied to Maverick's topic in Banana PI CM4-IO

I'm not sure who really needs the python bindings. Users keep talking about WiringPi not working for their device, but they don't say what functionality they want to use with it. In the isolated cases where they do, it is such trivial applications that can be conveniently operated with the available command line tools. So no need to involve any programming activities. And the same is true for the Sysfs API fraction. The existing command line tools cover the entire Sysfs spectrum and even go beyond. For example, I would like to see how someone implements this one-liner with the sysfs: gpioset --toggle 100ms,100ms,100ms,100ms,100ms,300ms,300ms,100ms,300ms,100ms,300ms,300ms,100ms,100ms,100ms,100ms,100ms,700ms --consumer panic con1-08=active I'm curious about the precise timing to generate such a blinking pattern. Or retrieving a pin for three keystrokes and resulting script execution: gpiomon --num-events=3 --edges=rising con1-08 && bash-script For the users who actually need more complex implementations and have the necessary coding skills, I think they should also be able to install the bindings with: sudo apt install python3-libgpiod And maybe someone wants to use C++ or Rust. But first and foremost, people seeking help should learn to describe the basic problem they are trying to solve, rather than asking for help as they think it can be solved. I don't usually respond to requests that don't match this, because nothing is more annoying than realizing that you have wasted unnecessary time on a solution when it could otherwise have been solved more appropriately. And look, what was the immediate response to my given reference in response to a direct question? In the case of such NOOBs, further engagement is usually not conducive. -

How to use wiringpi python for banana pi cm4 io?

usual user replied to Maverick's topic in Banana PI CM4-IO

The distribution of my choice offers me ready-made binary packages out-of-the-box. However, I have to install the Python 3 bindings separately. I know that the command line tools are apparently also available out-of-the-box in Armbian, but I don't know if the Python 3 bindings are also packaged separately. -

How to use wiringpi python for banana pi cm4 io?

usual user replied to Maverick's topic in Banana PI CM4-IO

What's wrong with libgpiod? -

You have provided far too little context, and I do not understand what you are talking about. But if it's just the output of a pattern waveform via a GPIO, you can do it with a one-liner from the command line: gpioset --toggle 100ms,100ms,100ms,100ms,100ms,300ms,300ms,100ms,300ms,100ms,300ms,300ms,100ms,100ms,100ms,100ms,100ms,700ms --consumer panic con1-08=active This is just an example and the emitted pattern doesn't meet your needs, but with adjusted timings it does exactly what you want. Of course, you can also write your application with program code. Libgpiod provides bindings for C++, python3 and Rust therefor.

-

Overlays are always device-specific, and in most cases, even use-case-specific. Trying to share between different devices takes a huge amount of maintenance as opposed to a simple copy of a similar overlay. All possible interactions have to be taken into account and expecting NOOB users to understand their dependencies is asking for trouble. And if they do have to be different later, it is even more difficult to sort them apart. And besides, what advantage does it bring to the user, since all Armbian images only address one specific device at a time?

-

A perfectly legitimate method, but not always available: This is not necessary, an overlay is just an overlay that overlays what already exists. Run the command "fdtoverlay..." three times in a row, keep DTB backups each time and compare them at last. This is a question for the one who came up with the overlay application method. Mainline kernel usually doesn't come with overlays, they are usually added by the Armbian build system. My method should also work in combination. Judging by the failure message, the overlay can't be found. You have thus proven that there is nothing wrong with the base DTB and the DTBO. It is to blame the tool (method) that is used to apply the overlay. Dynamic application of overlays at boot time is only necessary if the firmare can automatically detect the need to apply an overlay by reading hardware identifiers. This is a method inherited from the Raspberry Pi and has its justification with its expansion modules with hardware identifier. Using a configuration file (armbianEnv.txt) is just another way of statically selecting overlays and basically the same procedure as my method. I understand that overpaid Armbian developers implement something like this method out of boredom for devices that don't need it and then dynamically apply the statically selected overlays. But I prefer to rely on tools (fdtoverlay) that are more mature. After all, it's used in every kernel build (it's part of the kernel sources) and maintained by the mainline Devicetree developers. And if something didn't work with it, it would immediately stand out and also be repaired. Because kernels are very rarely built 😉

-

Chances are, you've destroyed the integrity of the resulting DTB and the kernel can't even access the device with the rootfs. That dtso was more ment in @robertoj's direction to showcase gpio-line-names setup for this device. From the rest of your post, I don't really know what you're talking about. Nevertheless, I have written an overlay from scratch according to your recipe. For a first test, please disable any overlay application using the Armbian method in armbianEnv.txt and apply the overlay with my method. With an copy of your base DTB execute this command: fdtoverlay --input sun50i-h6-orangepi-3-lts.dtb --output sun50i-h6-orangepi-3-lts.dtb sun50i-h6-orangepi-3-lts-spi1.dtbo Now swap the currently used DTB with this one, reboot and test if it works as expected. After a successful test, you can try out the application with the Armbian method. sun50i-h6-orangepi-3-lts-spi1.dtso

-

Out of curiosity and for my own education, I wrote the attached overlay. If you apply this to the base DTB of the orangepi 3 lts, the GPIO code of @robertoj should be able to run unchanged on this device as well. sun50i-h6-orangepi-3-lts-con1.dtso

-

How to wait for a button press is outlined here. If you want to be able to write code that is device-agnostic, you'll need to set up generic gpio-line-names. See here for reference. With that in place e.g.: gpiomon --num-events=1 con1-08 && my-script will be able to run on any device that has the gpio-line-names set up in the same way. I.e. will wait at pin 8 of the first extention connector for an event.

-

Ok, everything is as expected. So attached is the overlay source as promised and here is how to use it: To avoid path information in the commands, I use cd /boot/dtb/allwinner/ as the working directory. To compile the overlay, execute: dtc --in-format dts sun50i-h618-orangepi-zero3-con1.dtso --out sun50i-h618-orangepi-zero3-con1.dtbo This is an one-off action that has only to be repeated when the overlay source changes. To apply the overlay statically, execute: mv sun50i-h618-orangepi-zero3.dtb sun50i-h618-orangepi-zero3-native.dtb fdtoverlay --input sun50i-h618-orangepi-zero3-native.dtb --output sun50i-h618-orangepi-zero3-con1.dtb sun50i-h618-orangepi-zero3-con1.dtbo ln -s sun50i-h618-orangepi-zero3-con1.dtb sun50i-h618-orangepi-zero3.dtb By modifying the symlink, you can now easily switch between both or possibly multiple pre-build variants of DTBs, regardless of which overlay application method your system provides or not. If you don't want pre-built variant support, an in-place application is also possible with: fdtoverlay --input sun50i-h618-orangepi-zero3.dtb --output sun50i-h618-orangepi-zero3.dtb sun50i-h618-orangepi-zero3-con1.dtbo The application of the overlay must always be repeated if the base DTB changes (update). I've attached this feature to my kernel build process so I don't have to worry about it. The kernel install process is also an option to apply local overlays permanently. Of course, you can also use your OS's overlay application system. If you want to modify the label names, use my *.dtso as a template and choose a new overlay filename. In this way, both variants can exist at the same time and you can choose as you wish. Since this overlay only modifies one property value, it can even be applied repeatedly. I.e. with: fdtoverlay --input sun50i-h618-orangepi-zero3.dtb --output sun50i-h618-orangepi-zero3.dtb sun50i-h618-orangepi-zero3-my-labels.dtbo you can simply rewrite the property without any further necessary actions. I'm sorry that my method doesn't require patches or even rebuilding the entire OS and works in every system from userspace that has the DTC software package installed and the firmware get the DTB provided from the userspace (filesystem) for loading. Any system administrator should be able to execute the commands (either symlink adjustment or overlay application) from a running system and activate the changes by rebooting. sun50i-h618-orangepi-zero3-con1.dtso

-

I've written an overlay that I think is correct. Since I don't have a Orange Pi Zero 3 I need your help to verify my work. Therefore I have applied my overlay staticly to your provided DTB. Please replace your existing DTB with the attached one. Reboot and post the "cat /sys/kernel/debug/gpio" output again. If everything works as expected, I will post the overlay source and explain how to use it. sun50i-h618-orangepi-zero3.dtb

-

As allredy stated, a quite simple DTB overlay. See this thread as an entry point for discussions that have already taken place on this topic. More on gpio-line-names assignment can also be found in other posts of mine in this forum. But after the last posts in this thread I have doubts whether I should continue to participate here. IMHO the basic knowledge seems to be lacking, but they think to know exactly what the solution should look like and don't accept any other implementation. Something like this has repeatedly led to inconclusive discussions, which I am no longer prepared to engage in. If you'd like, I can try to walk you through creating a suitable overlay. For a first step, I need the output of cat /sys/kernel/debug/gpio from your running system and the DTB that is currently being used for your system. And last but not least, I need an online link to the DTB source of your device or is this already correct.

-

This thread inspired me to tinker with GPIO again. I was curious to see how I would realize the example for myself. Since python is not my strong point, I chose a poor man's solution with "dnf install libgpiod-utils". I then ran this command: gpioset --toggle 100ms,100ms,100ms,100ms,100ms,300ms,300ms,100ms,300ms,100ms,300ms,300ms,100ms,100ms,100ms,100ms,100ms,700ms --consumer panic con1-08=active I'm wondering now what the flashing code could say, but I think a ham radio operator would know for sure what it means 😉 Recreating the python example wasn't really challenging with: gpioset -t1000 -Cblink-example con1-08=1 And besides, I find my flashing pattern much more interesting. I would be interested to know how something like this would be implemented with sysfs. Certainly not with a one-liner. These commands work the same way on all my different devices, i.e. it is used for all pin 8 of the first expansion connector. I find it more pleasant to address the pins with con1-xx than to deal with some device-specific gpio-lines again and again. Generated code is therefore portable and can be used on any device without modification, provided that the device can provide GPIO functionality on the corresponding pins. The only requirement is that the same gpio-line-name is used for the corresponding GPIO of the extension port. But this can be ensured by a simple DTB overlay. Creating such an overlay is a one-time effort for any device and the first thing I do for a device that I care off.

-

The solution is outlined here but there was no incentive to land it in Armbian.

-

The gst-play-hevc.mp4-pipeline.pdf is the visualisation of the pipeline composed by: gst-play-1.0 --use-playbin3 hevc.mp4

-

FWIW I'm keep puzzled about the options you still throw at mpv. In my Wayland environment, I only have "hwdec=auto" and "hwdec-codecs=all" to automatically enforce video hardware decoding (v4l2 via drm KMS) and take into account all possible hardware decoders. This is necessary because otherwise SW decoding is preferred by default. I also don't understand why you insist on involving the GPU, it doesn't have any business in a video pipeline, as I just demonstrated here. The GPU is only used by the GUI and is therefore secondary. Video playback works perfectly even when no GPU driver is loaded at all.

-

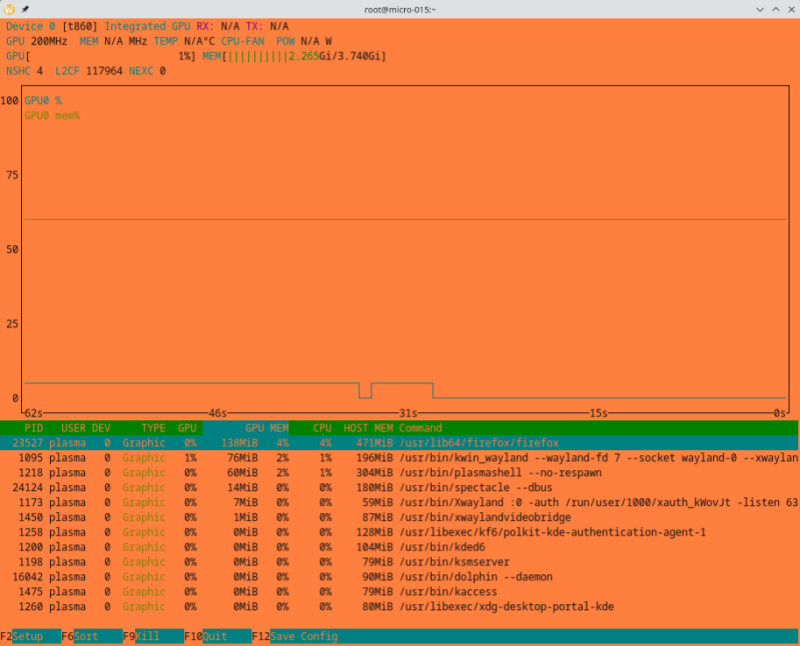

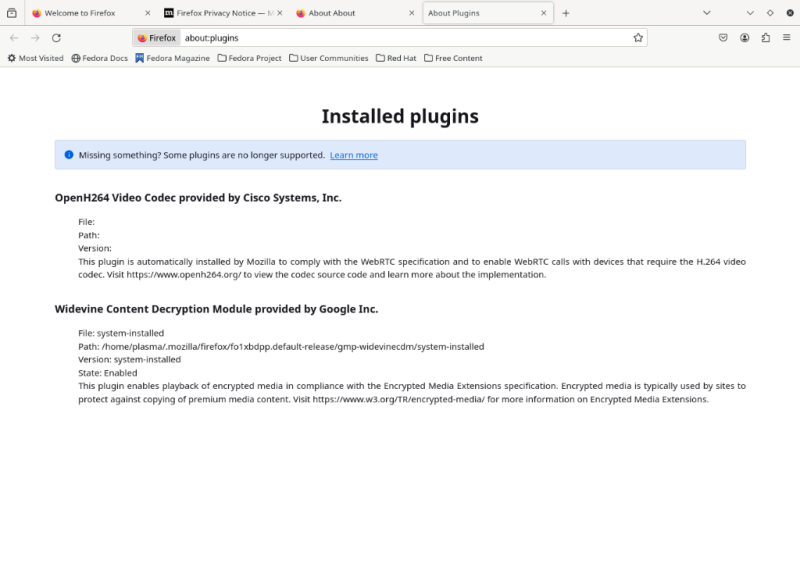

The output of Code "MOZ_GFX_DEBUG=1 MOZ_LOG="PlatformDecoderModule:5" firefox" tells me a different story, see firefox-nanopc-t4.txt for reference. If you don't perform any actions with the graphical UI, nvtop confirms very nicely how the GPU and CPU are idle while Firefox plays a video using the VPU: And this is also nice to have: The VPU support of rk356x still lacks rkvdec2 support, but the Hantro decoder is already quite compliant, while the support of gstreamer and FFmpeg decoders is on pair. See fluster-run-odroid-m1-summary.txt for reference. So a lot is already happening. And all this despite the fact that the distribution of my choice doesn't support any of my devices (not even unofficially). It's all pure mainline, with the exception of the FFmpeg package, which I had to rebuild because the request API support isn't available out-of-the-box there yet. firefox-nanopc-t4.txt fluster-run-odroid-m1-summary.txt

-

RK3399 DWC3 USB3.0 driver failed after recent kernel update

usual user replied to winnt5's topic in Rockchip

FWIW, I've moved to 6.7.0.rc2 and the mainline USB regression can no longer be observed: /: Bus 08.Port 1: Dev 1, Class=root_hub, Driver=ehci-platform/1p, 480M /: Bus 07.Port 1: Dev 1, Class=root_hub, Driver=ohci-platform/1p, 12M |__ Port 1: Dev 2, If 0, Class=Human Interface Device, Driver=usbhid, 1.5M |__ Port 1: Dev 2, If 1, Class=Human Interface Device, Driver=usbhid, 1.5M /: Bus 06.Port 1: Dev 1, Class=root_hub, Driver=xhci-hcd/1p, 5000M |__ Port 1: Dev 2, If 0, Class=Hub, Driver=hub/4p, 5000M |__ Port 4: Dev 3, If 0, Class=Mass Storage, Driver=usb-storage, 5000M /: Bus 05.Port 1: Dev 1, Class=root_hub, Driver=xhci-hcd/1p, 480M |__ Port 1: Dev 2, If 0, Class=Hub, Driver=hub/4p, 480M |__ Port 1: Dev 5, If 0, Class=Vendor Specific Class, Driver=ftdi_sio, 12M |__ Port 2: Dev 6, If 0, Class=Vendor Specific Class, Driver=ftdi_sio, 12M /: Bus 04.Port 1: Dev 1, Class=root_hub, Driver=ohci-platform/1p, 12M /: Bus 03.Port 1: Dev 1, Class=root_hub, Driver=ehci-platform/1p, 480M /: Bus 02.Port 1: Dev 1, Class=root_hub, Driver=xhci-hcd/1p, 5000M |__ Port 1: Dev 2, If 0, Class=Mass Storage, Driver=uas, 5000M /: Bus 01.Port 1: Dev 1, Class=root_hub, Driver=xhci-hcd/1p, 480M But new toys in town to play with: